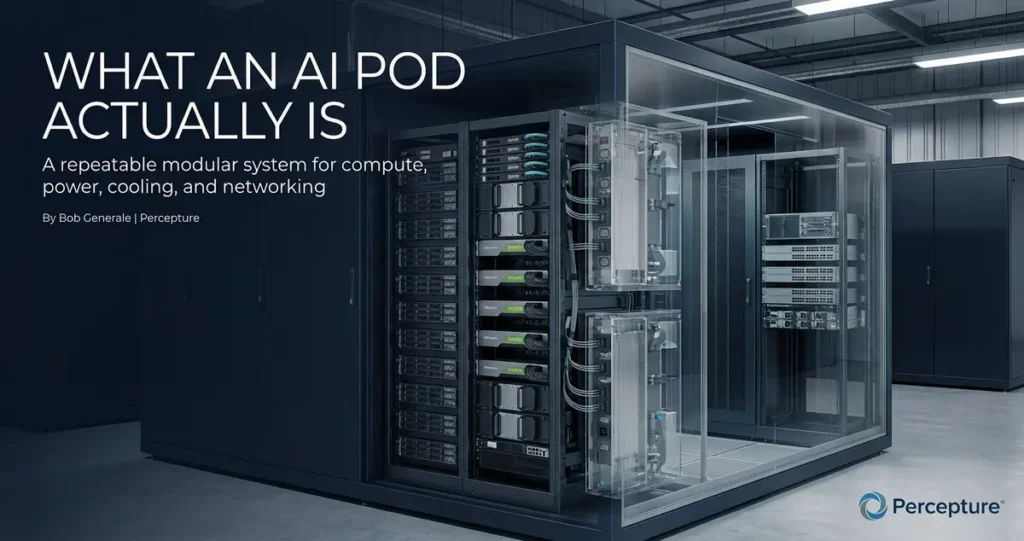

An AI pod should not be judged by branding alone. Buyers should look at power integration, modular design, deployment speed, interconnection readiness, scalability, and how well the infrastructure fits real inference workloads.

Table of Contents

- What an AI pod actually is

- AI pods vs containerized builds

- Why the market gets the language wrong

- Why modular AI deployment matters

- Where IXP pods and inference infrastructure fit

- 4 things buyers should verify

- Common mistakes buyers make

- FAQ

What is an AI pod?

An AI pod is a modular unit of AI infrastructure that combines compute, power, cooling, and supporting systems into a more repeatable deployment model. But not every AI pod is built the same way — and not every containerized system should be treated as true modular infrastructure.

What an AI pod actually is

The term AI pod is everywhere right now. But not every product using that label is the same thing. As part of Percepture’s ongoing work in data center marketing strategy, we’ve seen how loose terminology creates real confusion for buyers trying to make serious infrastructure decisions. This article cuts through the noise. It explains what a real modular AI build looks like, how it differs from a containerized setup, and what questions buyers should be asking before they commit.

An AI pod is not just a box with GPUs in it.

At its core, an AI pod is a modular infrastructure unit designed to bring together compute, power, cooling, and networking into a repeatable, deployable package. The key word is repeatable. A true AI pod is designed to be deployed again and again — in different locations, at different scales — without reinventing the architecture each time.

That repeatability is what separates a real AI pod from a one-off build.

Think of it like a prefabricated building module. You design it once. You test it once. Then you deploy it wherever you need it. The design doesn’t change. The integration doesn’t change. The performance profile doesn’t change.

That’s the promise of modular AI infrastructure. And it’s a meaningful promise — when the product actually delivers it.

Why language matters here:

The word “pod” has been borrowed from software (think Kubernetes pods) and applied loosely to hardware. That creates confusion. A software pod and a hardware AI pod are very different things. Buyers who don’t know the difference can end up comparing products that aren’t actually comparable.

AI pods vs containerized builds

Here’s where the market gets sloppy.

A containerized build puts hardware inside a shipping container. That’s it. The container is the enclosure. It doesn’t automatically mean the system is modular, repeatable, or optimized for AI inference workloads.

An AI pod, done right, is a purpose-built modular unit. The power integration is designed in. The thermal management is designed in. The interconnection architecture is designed in. It’s not just hardware in a box — it’s a system.

Comparison Chart

| Model | What It Usually Includes | Main Strength | Main Limitation | Best Fit |
|---|---|---|---|---|
| AI Pod | Integrated compute, power, cooling, and networking | Repeatability, deployment speed, inference-ready design | Higher upfront design investment | Scalable AI deployments, edge AI, distributed inference |
| Containerized Build | Hardware inside a shipping container enclosure | Portability, familiar form factor | Not always modular or inference-optimized | Temporary deployments, general compute |
| Modular AI Deployment | Repeatable pod-based rollout across sites | Speed, consistency, scalability | Requires upfront architecture planning | Multi-site AI infrastructure programs |
| Modular Data Center | Full facility built from modular components | Faster build time vs traditional data centers | Still a facility-scale investment | Greenfield AI infrastructure, hyperscale edge |
| IXP Pod | Interconnection-focused modular infrastructure | Low-latency access, network-adjacent placement | Specialized use case | Inference at the edge, distributed AI workloads |

The takeaway: packaging is not architecture. A container is a form factor. A modular AI pod is a system design philosophy.

Why the market gets the language wrong

Vendor marketing has blurred the lines.

When a product is called an “AI pod,” it could mean:

- A containerized server rack

- A prefabricated modular unit

- A software-defined compute cluster

- A branded hyperscaler product

- A purpose-built inference node

All of these are real products. None of them are the same thing.

The problem is that buyers are comparing them as if they are. And vendors — some of them — are happy to let that confusion persist.

Here’s the honest version: “containerized” is not the same as “modular.” A container is a physical enclosure. Modular means the system is designed to be repeated, scaled, and integrated without custom engineering every time.

A truly modular AI build has:

- Standardized power integration

- Repeatable thermal design

- Defined interconnection architecture

- Documented deployment process

A containerized build may have none of those things. It may just be a rack in a box.

Buyers who ask better questions get better infrastructure. The question isn’t “is it a pod?” The question is: “Is it actually modular, and what does that mean for my deployment?”

Why modular AI deployment matters

Speed is the first reason.

When infrastructure is truly modular, deployment timelines compress. You’re not designing from scratch at each site. You’re replicating a proven system. That matters when AI workloads are scaling fast and time-to-deployment is a competitive variable.

Repeatability is the second reason.

A modular AI deployment model means your second site looks like your first. Your tenth site looks like your first. That consistency reduces risk. It reduces cost. It reduces the number of things that can go wrong.

Scalability is the third reason.

Modular infrastructure scales in units. You add pods, not custom builds. That’s a fundamentally different cost and complexity curve than traditional infrastructure expansion.

Inference workloads make this more important, not less.

AI inference, running trained models in production, has different infrastructure requirements than training. Inference is latency-sensitive. It’s often distributed. It needs to be close to the data and close to the user. That means deployment architecture matters more, not less, as AI moves from training to production.

A modular AI deployment model built for inference needs:

- Low-latency interconnection

- Distributed deployment capability

- Power and thermal design that supports dense GPU workloads

- The ability to replicate quickly across locations

That’s not a container. That’s a system.

According to the Uptime Institute, infrastructure deployment timelines are one of the top three factors affecting AI project ROI. Speed to deployment isn’t just an operational metric — it’s a business outcome.

Where IXP pods and inference infrastructure fit

Interconnection changes the conversation.

An IXP pod, infrastructure deployed at or near an internet exchange point, is designed for one specific advantage: low-latency access to network traffic. For AI inference workloads, that matters.

When a model needs to respond in milliseconds, the distance between the inference node and the end user is a real variable. Placing modular AI infrastructure at interconnection points reduces that distance. It reduces latency. It improves performance.
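To put rough numbers on that distance, here is a minimal sketch of the propagation delay alone. It assumes light travels through optical fiber at about 200,000 km/s (roughly two-thirds of its speed in a vacuum), and it ignores routing, queuing, and the model's own compute time. The path lengths are hypothetical examples, not measurements from any real deployment.

```python
# Rough round-trip propagation delay over an optical fiber path.
# Assumption: signal speed in fiber is about 200,000 km/s (~5 microseconds per km).
# Routing hops, queuing, and inference compute time are deliberately ignored.

FIBER_SPEED_KM_PER_S = 200_000  # approximate figure, an assumption of this sketch


def round_trip_ms(distance_km: float) -> float:
    """Return the round-trip propagation delay in milliseconds."""
    if distance_km < 0:
        raise ValueError("distance_km must be non-negative")
    one_way_seconds = distance_km / FIBER_SPEED_KM_PER_S
    return 2 * one_way_seconds * 1000


if __name__ == "__main__":
    # Hypothetical fiber path lengths between a user and an inference node.
    for km in (50, 500, 2000):
        print(f"{km:>5} km -> {round_trip_ms(km):.1f} ms round trip")
```

Under these assumptions, every 100 km of fiber path adds about 1 ms of round-trip delay before the model does any work, which is why placement near interconnection points shows up in response times.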

This is why distributed inference is becoming a real infrastructure strategy, not just a theoretical one. Instead of centralizing all inference in one large facility, organizations are distributing inference nodes closer to where the requests originate.

Modular AI deployment is what makes distributed inference practical. You can’t distribute inference at scale if every deployment requires custom engineering. You need a repeatable, modular unit that can be placed at multiple interconnection points without reinventing the architecture each time.

As the boundaries between data centers and carrier hotels blur, companies require a specialized telecom marketing strategy to clearly communicate the value of low-latency edge positioning to modern enterprises.

4 things buyers should verify before choosing an AI pod or modular AI build

For vendors, providing clear, verifiable specs on power and thermal integration is an effective way to improve data center lead generation for high-density AI environments. For buyers, the rule is simpler: don’t evaluate AI infrastructure on branding. Evaluate it on these four things.

1. Power and thermal integration

Is the power system designed into the pod, or bolted on? True modular AI infrastructure has integrated power and thermal management. It’s not an afterthought. Dense GPU workloads generate significant heat. If the thermal design isn’t purpose-built for AI, performance and reliability will suffer. This often starts at the power entry point; for instance, choosing the right UL 891 switchboards for AI data centers is a critical success factor for maintaining stability.

2. Deployment speed and modular repeatability

How long does it take to deploy the first unit? How long does it take to deploy the tenth? If the answer to both questions isn’t roughly the same, the system isn’t truly modular. Ask vendors for real deployment timelines — not marketing estimates.

3. Interconnection and inference readiness

Where can this infrastructure be placed? Can it be deployed at or near interconnection points? Is it designed for low-latency inference workloads, or is it optimized for bulk training? These are different use cases with different infrastructure requirements. Make sure the product matches your actual workload.

4. Scalability without excess complexity

Can you scale from one pod to ten without a major re-architecture? Modular infrastructure should scale in units. If scaling requires significant custom engineering at each step, the system isn’t as modular as advertised. Ask for a scaling roadmap — not just a product sheet.
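The repeatability test in item 2 (the first and tenth deployments should take roughly the same time) can be turned into a simple check once a vendor provides real timelines. The sketch below is one possible way to frame it. The function name, the 25% tolerance, and the sample timelines are all hypothetical choices for illustration, not an industry standard.

```python
# A simple check of the "first unit vs tenth unit" deployment question.
# The 25% tolerance is an illustrative assumption; pick a threshold that
# matches your own definition of "roughly the same".


def roughly_same(first_weeks: float, tenth_weeks: float, tolerance: float = 0.25) -> bool:
    """Return True if the tenth deployment time is within `tolerance` of the first."""
    if first_weeks <= 0:
        raise ValueError("first_weeks must be positive")
    relative_change = abs(tenth_weeks - first_weeks) / first_weeks
    return relative_change <= tolerance


if __name__ == "__main__":
    # Hypothetical vendor-reported timelines, in weeks.
    print(roughly_same(first_weeks=12, tenth_weeks=13))  # small drift
    print(roughly_same(first_weeks=12, tenth_weeks=20))  # large drift
```

A large gap in either direction is worth a follow-up question: a much slower tenth unit suggests custom engineering at each site, and a much faster one suggests the first unit was closer to a prototype than a finished product.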

Common mistakes buyers make

These are the patterns we see most often.

Assuming every AI pod is the same.

The label doesn’t define the product. Two vendors can both call their product an “AI pod” and deliver completely different infrastructure. Evaluate the system, not the name.

Confusing containers with true modular infrastructure.

A container is a form factor. Modular is a design philosophy. They are not the same thing. A containerized build can be modular — but it isn’t automatically modular just because it’s in a container.

Focusing only on GPUs.

GPU count is one variable. Power integration, thermal design, interconnection architecture, and deployment repeatability are equally important. A system with great GPUs and poor power integration will underperform.

Ignoring power integration.

Power is the constraint that most buyers underestimate. Dense AI workloads require significant, stable power. If the power system isn’t designed for the workload, the workload will be throttled. Ask about power density per rack and ensure your switchboard manufacturer understands the unique demand profiles of 2026-era AI infrastructure.

Overlooking deployment speed.

Time-to-deployment is a business variable. If your AI infrastructure takes 18 months to deploy, your competitors who deploy in 6 months have a real advantage. Modular AI builds exist, in part, to compress that timeline.

Not defining the end use case clearly.

Training infrastructure and inference infrastructure are different. Edge AI infrastructure and centralized AI infrastructure are different. Define your use case before you evaluate products. Otherwise, you’re comparing things that aren’t meant to do the same job.

As part of Percepture’s digital infrastructure PR strategy work, we’ve seen how clearly defining the use case changes which infrastructure options even belong in the conversation.

Chart: What Matters Most in Modular AI Deployment

| Priority | Share |
|---|---|
| Power & Thermal Integration | 28% |
| Deployment Speed | 24% |
| Interconnection Readiness | 22% |
| Scalability | 16% |
| Modular Repeatability | 10% |
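One way to use these shares is as weights in a vendor scorecard. The sketch below assumes each priority is rated on a 0 to 10 scale by the buyer. The dictionary keys, the rating scale, and the sample ratings are hypothetical; only the weights come from the chart above.

```python
# Weighted vendor scorecard using the shares from the chart as weights.
# Ratings are assumed to be on a 0-10 scale; the sample vendor is made up.

WEIGHTS = {
    "power_thermal": 0.28,
    "deployment_speed": 0.24,
    "interconnection": 0.22,
    "scalability": 0.16,
    "repeatability": 0.10,
}


def weighted_score(ratings: dict[str, float]) -> float:
    """Combine per-priority ratings (0-10) into a single weighted score (0-10)."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS)


if __name__ == "__main__":
    # Hypothetical ratings for one vendor.
    vendor = {
        "power_thermal": 8,
        "deployment_speed": 6,
        "interconnection": 7,
        "scalability": 5,
        "repeatability": 9,
    }
    print(f"Weighted score: {weighted_score(vendor):.2f} / 10")
```

Because the weights sum to 1, the result stays on the same 0 to 10 scale as the ratings, so scores for different vendors can be compared directly.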

Frequently Asked Questions About AI Pods

Q: What is an AI pod?

An AI pod is a modular unit of AI infrastructure that integrates compute, power, cooling, and networking into a repeatable deployment package. It is designed to be deployed consistently across multiple locations without custom engineering at each site.

Q: What is the difference between an AI pod and a containerized build?

A containerized build places hardware inside a shipping container. An AI pod is a purpose-built modular system with integrated power, thermal management, and interconnection architecture. A container is a form factor. A modular AI pod is a system design.

Q: What is modular AI deployment?

Modular AI deployment is the practice of rolling out AI infrastructure using repeatable, standardized units — called pods — rather than custom-engineered builds at each site. It enables faster deployment, consistent performance, and easier scaling.

Q: Are modular AI builds the same as modular data centers?

Not exactly. A modular data center is a full facility built from modular components. A modular AI build is a unit-level deployment model. Modular AI builds can be deployed inside modular data centers, but they can also be deployed at edge sites, IXP locations, and other environments.

Q: What is an IXP pod?

An IXP pod is modular AI infrastructure deployed at or near an internet exchange point. It is designed to support low-latency inference workloads by placing compute closer to network traffic and end users.

Q: Why does inference infrastructure change deployment decisions?

AI inference is latency-sensitive. It needs to be close to the data and the user. That makes deployment location and architecture more important than in bulk training environments. Modular AI deployment supports distributed inference by enabling repeatable, fast deployment at multiple locations.

Q: What should buyers look for in modular AI infrastructure?

Buyers should evaluate power and thermal integration, deployment speed, modular repeatability, interconnection readiness, and scalability. GPU count alone is not a sufficient evaluation criterion.

Q: Is bigger always better for AI infrastructure?

No. Bigger infrastructure concentrates workloads and can increase latency for distributed use cases. For inference-heavy AI applications, distributed modular deployments often outperform centralized large-scale builds on the metrics that matter most: latency, deployment speed, and operational flexibility.


About Moonshot

Moonshot is a leader in high-density power and modular infrastructure, specializing in the engineering of AI Pods that bridge the gap between complex GPU requirements and rapid site deployment. The company is also a leading switchboard manufacturer and an innovator in high-density power systems for modular infrastructure. While the market often confuses simple containers with advanced systems, Moonshot focuses on integrated AI deployment solutions that include purpose-built UL 891 switchboards and thermal management designed for 2026-era inference workloads.

About the Author: Bob Generale

Bob Generale is the President of Percepture and a leader in data center marketing. With over a decade of experience in digital infrastructure, Bob helps brands navigate the shift to AI-driven search while improving data center lead generation through technical authority and strategic PR.